We all know what sound ‘reproduction’ is: put on a CD, or stream some music, and the system reproduces what was captured in the first place. So why – and we do it all the time – use the word ‘reproduction’ for live sound? Surely, it’s already there?

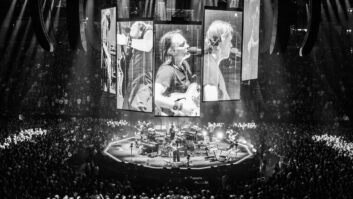

This is the conundrum facing concert venues. PA systems have always attempted to reproduce the sound on stage and deliver it throughout the venue to every member of the audience, at least in theory. The system installed is meant to be the optimum way of doing that for each particular space, and we’ve got quite good at it. However, the apostles of immersive audio are saying, in 2018, that we can do it even better.

Hang on: audiences are already immersed; why immerse them a bit more? The main rationale is that conventional stereo (left-right speaker hangs or stacks) is already a compromise; a reduction of the acoustic experience made necessary by the bottleneck of mixed channels, loudspeaker drivers and their sculpted exits. If these systems can be liberated from their narrow confines, live sound can be reproduced far more accurately – for every single seat in the house.

Convincing soundscape

The dominant technique now proposed by a handful of industry leaders has come to be known as object-based audio, a vague term for various DSP algorithms that release every sound from its given channel and render it as an adjustable spot source – a benign free radical of the sonic metabolism. When decoded in a compatible audio system, the objects describe a convincing three-dimensional soundscape, and the only real challenge to concert venue practice is the resulting need for more loudspeakers in the budget.
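To make the idea of an 'adjustable spot source' concrete, here is a minimal sketch of how one object's position might be turned into a gain for each loudspeaker. It uses simple distance-based amplitude panning, which is an illustrative stand-in and not the algorithm used by any of the products discussed here. The function name, the speaker layout and the `rolloff_db` and `blur` parameters are all assumptions made for the example.

```python
import math

def object_gains(obj_pos, speaker_positions, rolloff_db=6.0, blur=0.1):
    """Return one gain per speaker for a single sound object.

    Gains fall with distance from the object and are normalised so the
    squared gains sum to 1, keeping total power constant as it moves.
    """
    # Exponent chosen so level drops by rolloff_db per doubling of distance.
    a = rolloff_db / (20.0 * math.log10(2.0))
    raw = []
    for sx, sy in speaker_positions:
        d = math.hypot(obj_pos[0] - sx, obj_pos[1] - sy)
        d = math.sqrt(d * d + blur * blur)  # blur avoids division by zero
        raw.append(1.0 / d ** a)
    norm = math.sqrt(sum(g * g for g in raw))
    return [g / norm for g in raw]

# Hypothetical frontal row of five speakers, 3 m apart (x, y in metres).
SPEAKERS = [(-6.0, 0.0), (-3.0, 0.0), (0.0, 0.0), (3.0, 0.0), (6.0, 0.0)]

# A vocalist standing stage-left, 2 m behind the speaker line.
gains = object_gains((-3.0, 2.0), SPEAKERS)
```

Moving the object only changes `obj_pos`; the mix engineer never touches a channel assignment, which is the practical difference from left-right panning.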

The protagonists are up and running. Dutch start-up Astro Spatial Audio (ASA) has a digital signal processor it calls a ‘rendering engine’ – SARA II. It employs ASA’s Spatial Sound Wave (SSW) algorithm and a software suite to build sound reinforcement systems on up to 64 MADI channels or 128 Dante network streams, with up to 32 inputs; the objects spread out from this framework.

Not surprisingly, given that extra speakers are required, three of the leading speaker manufacturers have joined the cause. Meyer Sound markets Constellation, a means of redrawing acoustic boundaries with some impact on an audience’s sense of immersion, and the SpaceMap multichannel surround panning software; L-Acoustics has the all-embracing L-ISA format with specific live sound inventory; and d&b audiotechnik has launched its own hardware and software solution called Soundscape.

Elsewhere there may be smaller-scale concert hall applications for Sennheiser’s AMBEO platform, a digital upgrade to the 40-year-old Ambisonics experiment that is already well known in museums and other installations. Such applications will sometimes deploy more compact monitoring options such as those supplied by Genelec, among others. Genelec, by the way, has just published a handy guide to immersive audio principles, formats and solutions, so there really is no excuse. Indeed, all of the DSPs are compatible with any networkable speaker, but you can guess which ones are optimised along branded lines.

All of them promise more granular reproduction, with scalability a crucial consideration. From their speaker systems, concert venues require the opposite principle to children: they must be heard and not seen. And, in some cases, not really ‘heard’ at all: the subtle accentuation of artificial sound with no apparent means of support is a Wagner junkie’s wet dream. So: the larger the venue, the greater the challenge of melting your sound reinforcement into the background.

This is where the new generation of immersive audio systems should win friends. Because each individual speaker element – array or point source – plays a smaller part, relatively, it needs lower SPLs, less grunt and even less physical size: surely even the most sensitive artistic director must wiggle his tailcoat in glee at the thought. Furthermore, the first wave of immersive sound systems to have any real impact on concert venues will be 180° in orientation – not 360° at all.
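The claim that each speaker needs lower SPLs can be put in rough numbers. The sketch below assumes, purely for illustration, that every speaker contributes equally at the listening position and that the contributions add as uncorrelated power; real systems will deviate from both assumptions, and the function name is hypothetical.

```python
import math

def per_speaker_spl(target_spl_db, n_speakers):
    """SPL each of n equal, uncorrelated sources must deliver at the
    listener so that together they sum to target_spl_db."""
    return target_spl_db - 10.0 * math.log10(n_speakers)

# Level required from each speaker to reach 100 dB SPL in total.
for n in (2, 5, 8):
    print(n, "speakers:", round(per_speaker_spl(100.0, n), 1), "dB each")
```

Under these assumptions, a conventional left-right pair needs about 97 dB from each side, whereas five speakers need about 93 dB each and eight about 91 dB each: the margin that lets the individual boxes shrink.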

Degree ceremony

A 180° immersive sound system is confined to the horizontal plane more or less described by the proscenium arch concept of stage presentation. It spreads the stereo image across this plane in a way that FOH engineer Ben Findlay has described as the ‘Mona Lisa’ effect: the stereo sweet spot seems to follow you around the room like the enigmatic Renaissance icon’s famous eyes. For conventional music performance, this is enough to elevate the audience experience without getting tangled in the web of side-fills, in-fills, rear speakers and further intrusions upon fine civic architecture. The vertical plane is far more complex, so concert venues will naturally resist adopting 360° configurations until realistic benefits can be perceived.