Previously, Ian McMurray looked at 4K’s demand drivers and equipment challenges. In this final part, he discusses further requirements for making 4K an everyday reality.

Despite what some commentators would have you believe, there still appears to be much to do before 4K becomes a single, coherent, deployable technology.

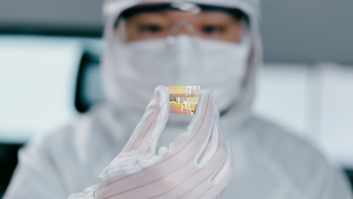

Higher pixel counts call for more sophisticated codecs to keep bandwidth requirements in check. For most of the industry, HEVC/H.265 is the answer, but it too comes at a price: its considerably more complex algorithm is said to need 10 times the computational power of the H.264 codec used in current 2K deployments, and the silicon to deliver that capability is still far from a commodity item.

“H.265 represents a significant technical challenge,” says Keith Watts, technical director at Cabletime. “The CPU performance required in PC-based software decoders is likely to keep 4K away from the corporate desktop for a while yet.”

Much also seems to hang on HDMI 2.0. “Better-informed potential customers will wait for the first ‘real’ HDMI 2.0 displays that will support higher bandwidth, colour depth and refresh rates as well as support for multiple audio/video streams over a single HDMI cable,” believes Stephan Vinke (pictured), product manager at Gefen Europe. “It’s similar to what we saw in the early years of HDTV.”

Martin Featherstone, CYP/audio visual product manager at CIE Group, tells a similar story. “Viewing a true 4K image on a display requires HDMI 2.0 to deliver a 50/60 frame rate with 10-12 bit deep colour,” he says. “4K resolution can still be achieved using an HDMI 1.4 cable, but that reduces the frame rate to 30/24 frames per second. HDMI 1.4 can be upgraded to achieve a frame rate of 60fps, but this reduces the colour [chroma subsampling] ratio to 4:2:0.”
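To see why frame rate and colour sampling trade off against each other, the arithmetic can be sketched as a rough link-budget check in Python. It is a back-of-the-envelope illustration, not a compliance tool: it assumes the standard CTA-861 timing for 3840x2160 (4,400 x 2,250 pixels per frame including blanking), takes the effective video payload as the maximum TMDS clock x three channels x eight data bits (8.16Gbit/s for HDMI 1.4, 14.4Gbit/s for HDMI 2.0), and ignores audio and other packet overhead. The function names are hypothetical.

```python
# Hypothetical helper: rough HDMI link-budget check for 3840x2160 video.
# Assumes CTA-861 timing (4400 x 2250 total pixels per frame, including
# blanking) and ignores audio, InfoFrames and other packet overhead.

# Effective video payload = max TMDS clock x 3 channels x 8 bits
# (TMDS uses 8b/10b coding: 10 bits on the wire carry 8 bits of data).
HDMI_EFFECTIVE_BPS = {
    "HDMI 1.4": 340_000_000 * 3 * 8,  # 8.16 Gbit/s
    "HDMI 2.0": 600_000_000 * 3 * 8,  # 14.4 Gbit/s
}

# Colour samples per pixel, counted in halves so the arithmetic stays integer:
# 4:4:4 = 3 samples, 4:2:2 = 2 samples, 4:2:0 = 1.5 samples per pixel.
CHROMA_HALF_SAMPLES = {"4:4:4": 6, "4:2:2": 4, "4:2:0": 3}

TOTAL_WIDTH, TOTAL_HEIGHT = 4400, 2250  # 3840x2160 active area plus blanking


def video_bps(frame_rate: int, bit_depth: int, chroma: str) -> int:
    """Bits per second needed for a 3840x2160 signal with these settings."""
    pixel_rate = TOTAL_WIDTH * TOTAL_HEIGHT * frame_rate
    return pixel_rate * bit_depth * CHROMA_HALF_SAMPLES[chroma] // 2


def links_that_fit(frame_rate: int, bit_depth: int, chroma: str) -> list[str]:
    """HDMI versions whose effective payload can carry the signal."""
    need = video_bps(frame_rate, bit_depth, chroma)
    return [name for name, cap in HDMI_EFFECTIVE_BPS.items() if need <= cap]


if __name__ == "__main__":
    for fps, depth, chroma in [(60, 8, "4:4:4"), (30, 8, "4:4:4"),
                               (60, 8, "4:2:0"), (60, 10, "4:4:4")]:
        gbps = video_bps(fps, depth, chroma) / 1e9
        print(f"{fps} fps, {depth}-bit {chroma}: {gbps:.3f} Gbit/s -> "
              f"{links_that_fit(fps, depth, chroma) or 'neither'}")
```

Under these assumptions, 4K at 60fps in 8-bit 4:4:4 needs about 14.26Gbit/s, which only HDMI 2.0 can carry, while both 30fps 4:4:4 and 60fps 4:2:0 drop to about 7.13Gbit/s, within reach of HDMI 1.4-class hardware. At about 17.82Gbit/s, 10-bit 4:4:4 at 60fps exceeds even HDMI 2.0, which is why deep colour at 4K60 over HDMI 2.0 relies on 4:2:2 or 4:2:0 sampling.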

“As well as the bandwidth challenge, there’s the plug technology challenge,” adds Franck Facon, marketing and communications director at Analog Way. “The technology for signal management at the input or output is only barely available to many manufacturers. HDMI 2.0 and DisplayPort 1.2 are not easy to implement. This is slowing the uptake of 4K resolution in the pro-AV corporate market. Norms and standards are always ahead of electronic components.”

The $64 million question is, of course: “To what extent is 4K technology deployable today?” The answer depends on who you talk to – and what you mean by 4K.

“4K is now being deployed as UHD, which is arguably not really 4K because it has neither the frame rate nor the colour performance of ‘real’ 4K,” opines Andy Fliss, director of marketing at TV One, who notes the importance of the consumer market for 4K in driving infrastructure. “Since bandwidth requirements are lower with 4K UHD, there are many options for manufacturers to create quick solutions using HDMI 1.4. Today there are no fully developed systems that can be used in pro-AV installations that fully support 4K 60Hz applications. We’re using reduced frame rates and refresh in order to squeeze the bandwidth into the current technologies. Most manufacturers are planning for HDMI 2.0, but full 4K silicon chipsets are not yet available, so complete solutions may still be some time away.”

“Like most transitions, the hype has come in advance of the delivery,” smiles Bill Schripsema, commercial product manager at Atlona. “We’re just beginning to see all types of infrastructure products available in 4K configurations: switchers, distribution amplifiers, scalers, extenders and so on. The chipsets to build the hardware to reliably manage the signals have only become available in recent months.” Atlona recently announced the AT-UHD-CLSO-612 up/down scaler supporting 4K sources and displays.

“The major IC manufacturers are working hard on this,” agrees Watts, “and the next 18 months should see the infrastructure challenge solved.”

The last word goes to Nick Mawer, UK marketing manager at Kramer Electronics. “4K is,” he says, “still a technology for early adopters.”

4K, then, is deployable – after a fashion. But 4K with all its bells and whistles is a little further away.

www.analogway.com

www.atlona.com

www.cabletime.com

www.cie-group.com

www.gefen.com

www.kramerelectronics.com

www.tvone.com